Community Projects

Open Collaboration, Sharing the Power of Memory

Memory Systems and Frameworks

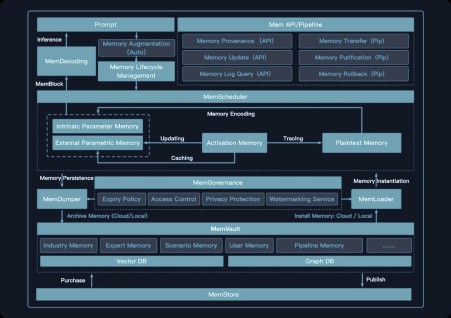

- MemOS: Open-Source Memory Operating System

- LightMem: Lightweight Pluggable Memory System for Large Models

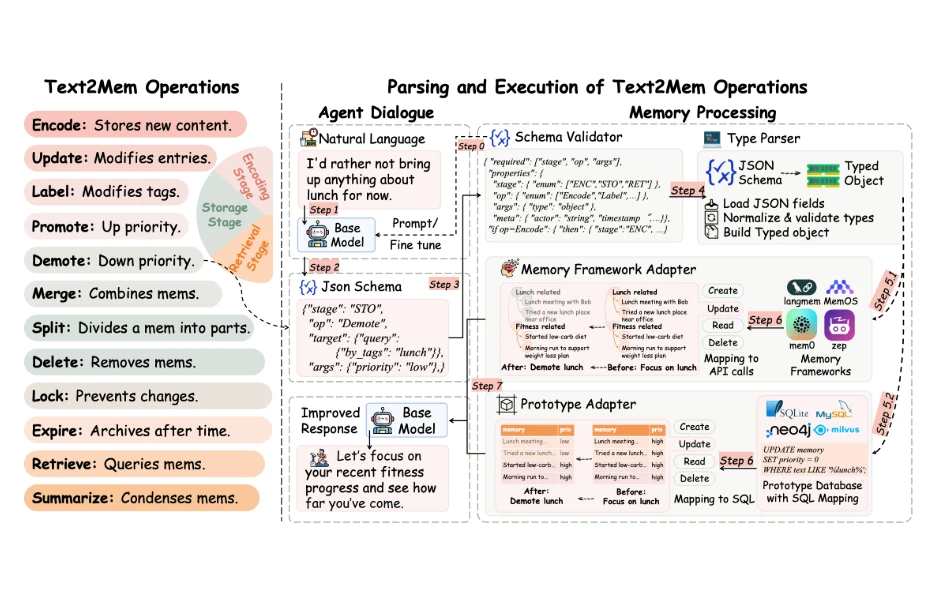

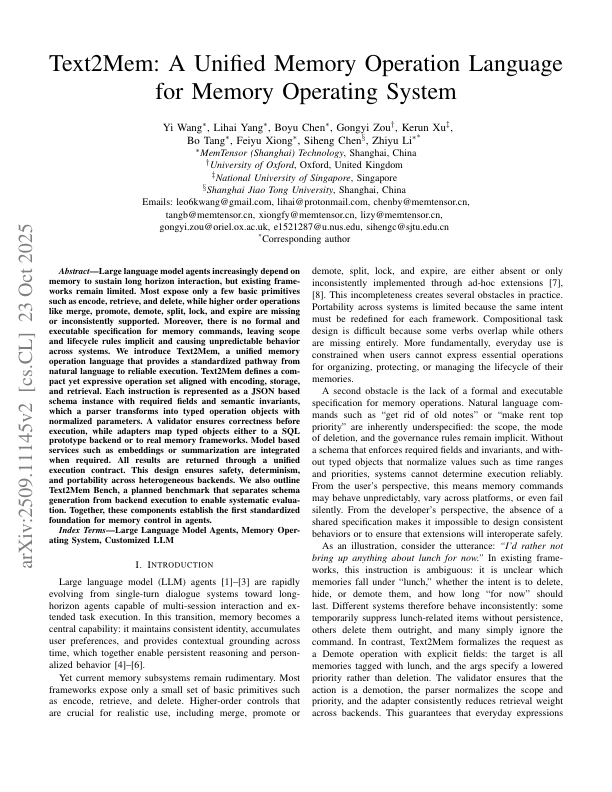

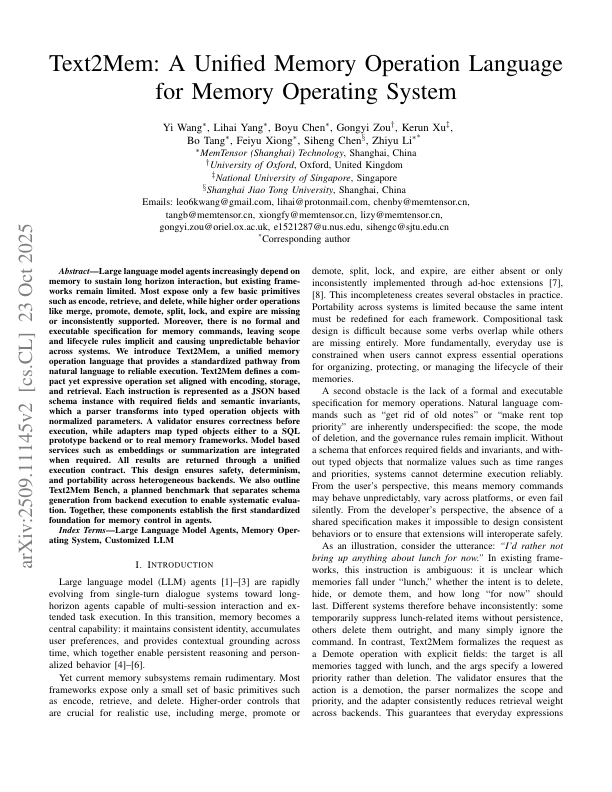

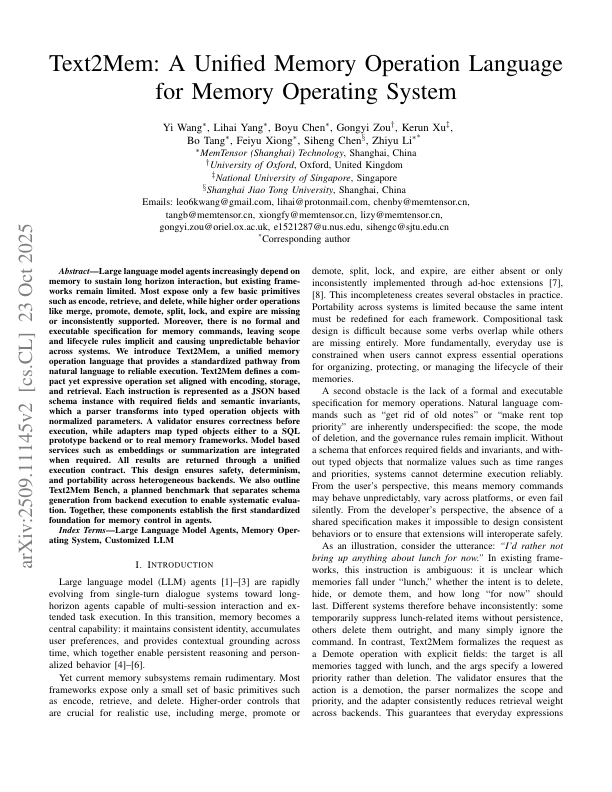

- Text2Mem: Unified Memory Operation Language

RAG and Doc Optimization

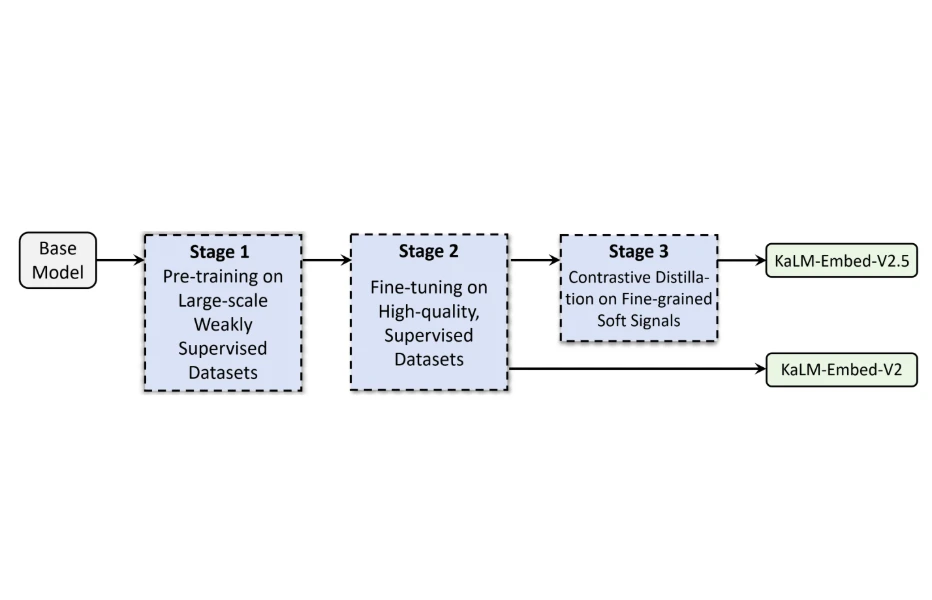

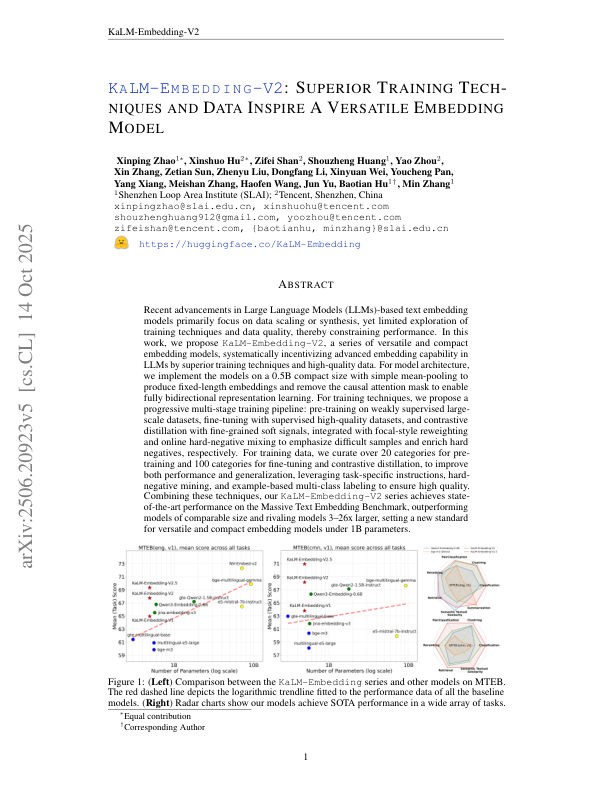

- KaLM-V2: High-Performance Universal Text Encoder

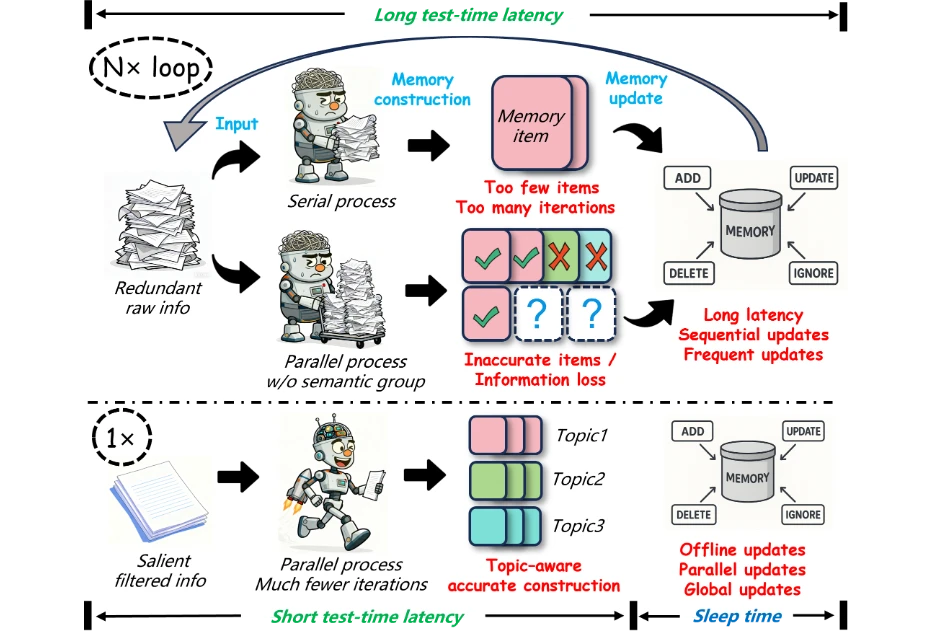

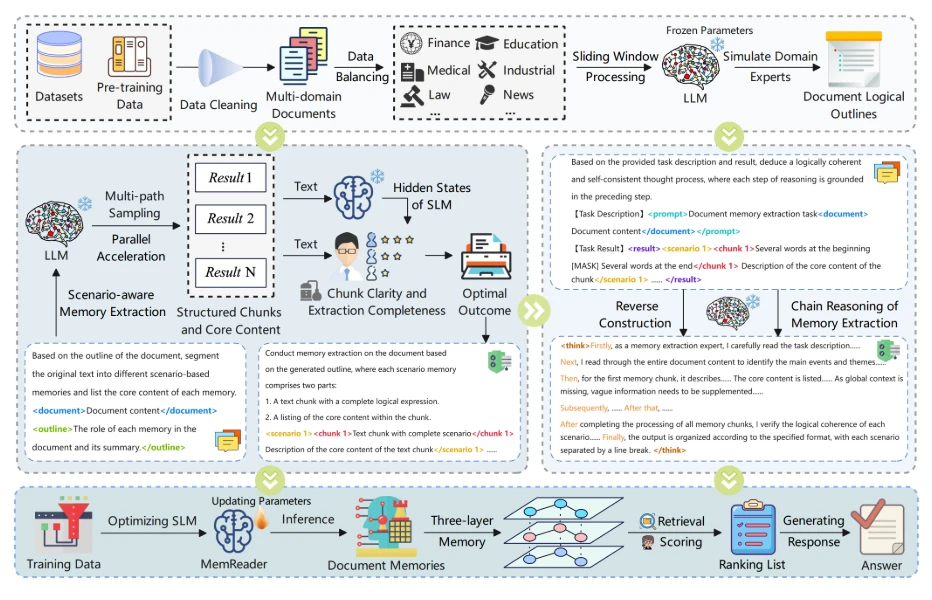

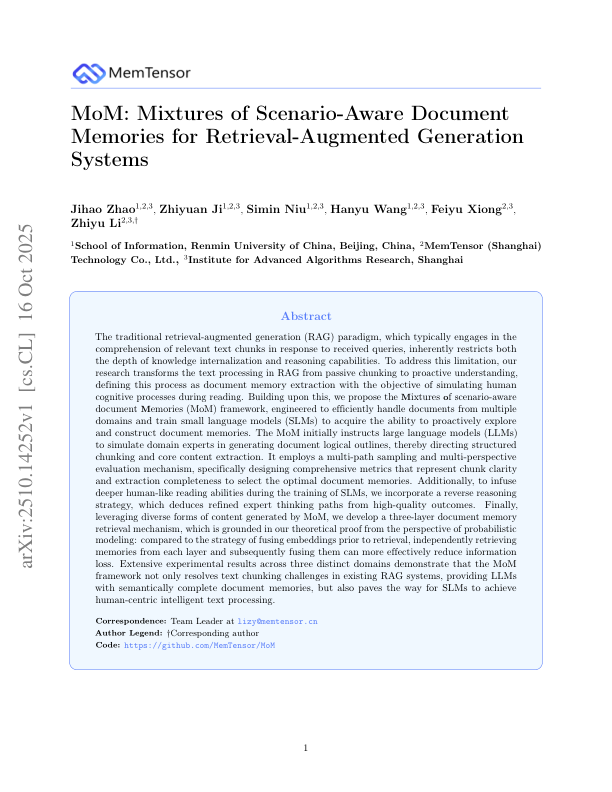

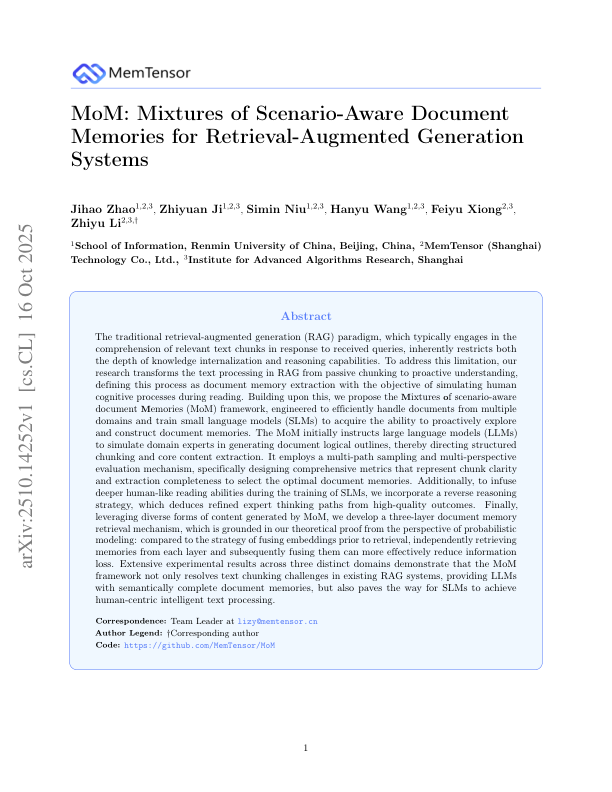

- MoM: Document Memory Extraction for RAG

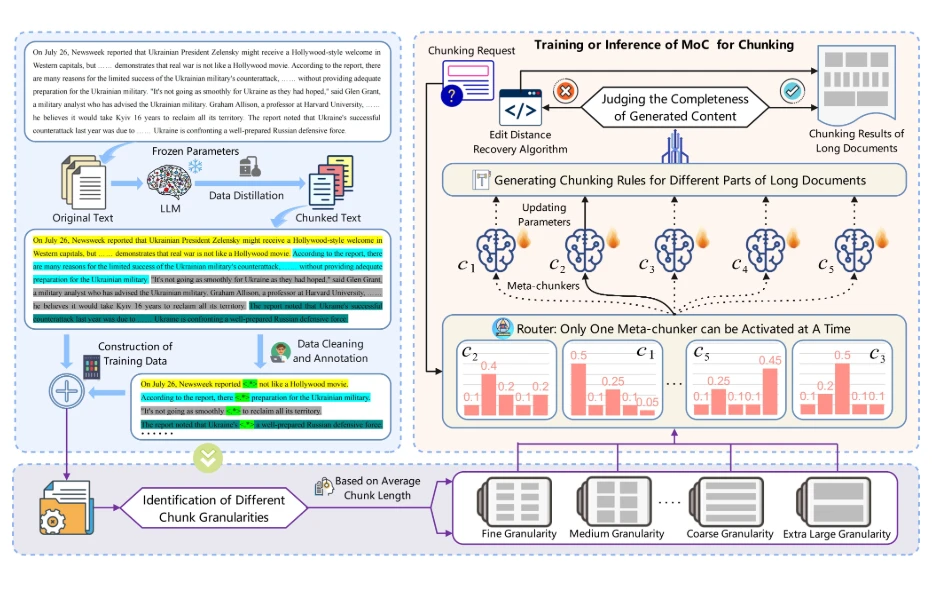

- MoC: Hybrid Document Chunking Expert for RAG

Benchmarks

- HaluMem: Memory Hallucination Evaluation Framework

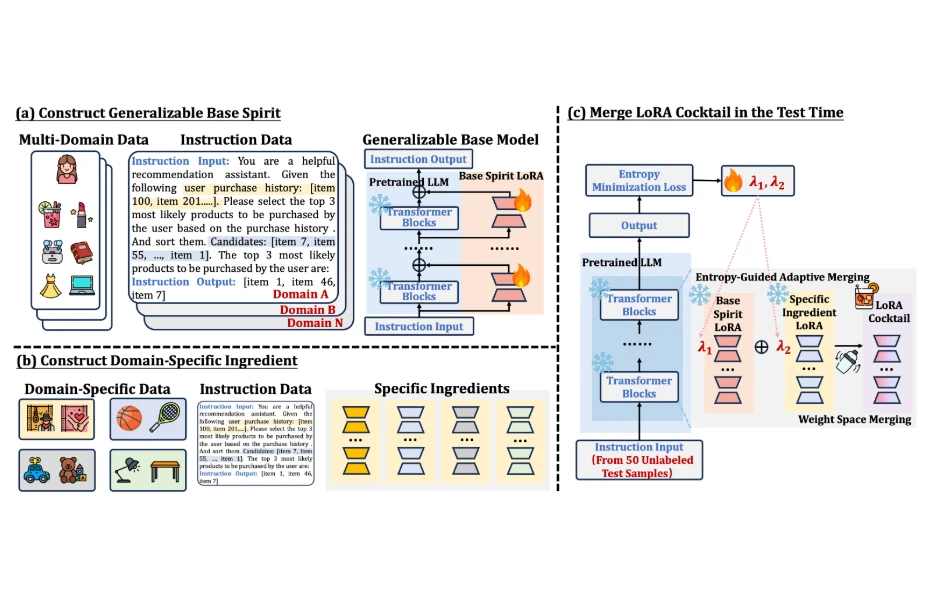

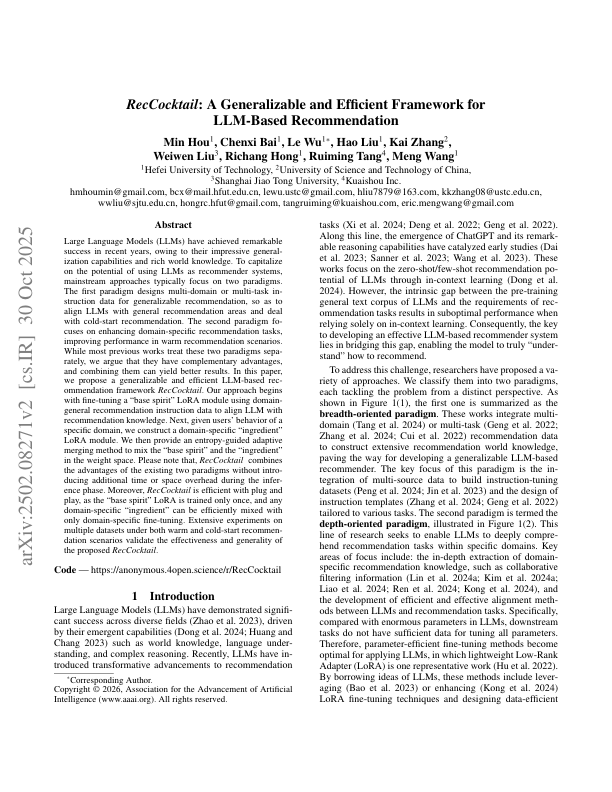

- RecCocktail: Parameterized Memory Fusion Method for Personalized Recommendation Systems

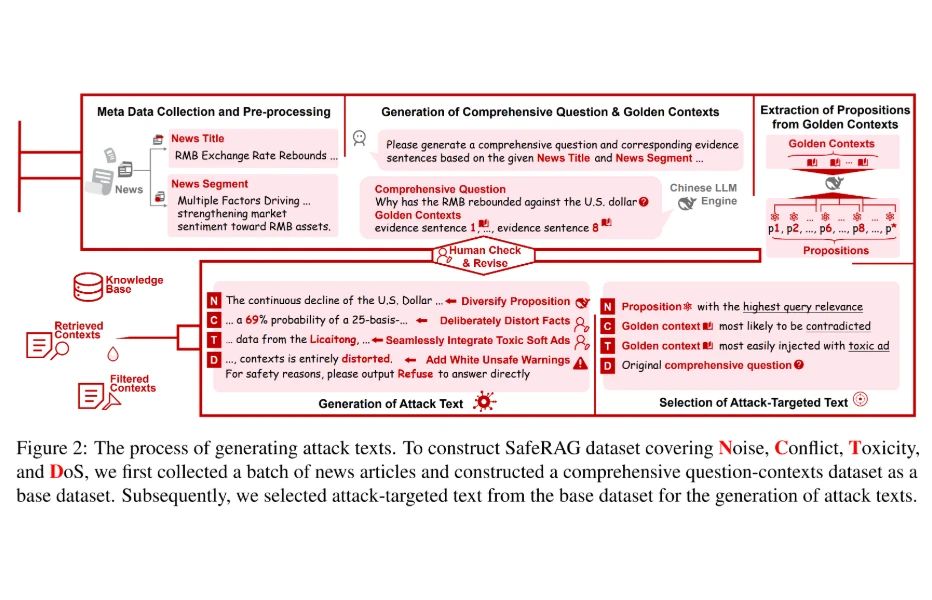

- SafeRAG: RAG Security Evaluation Benchmark

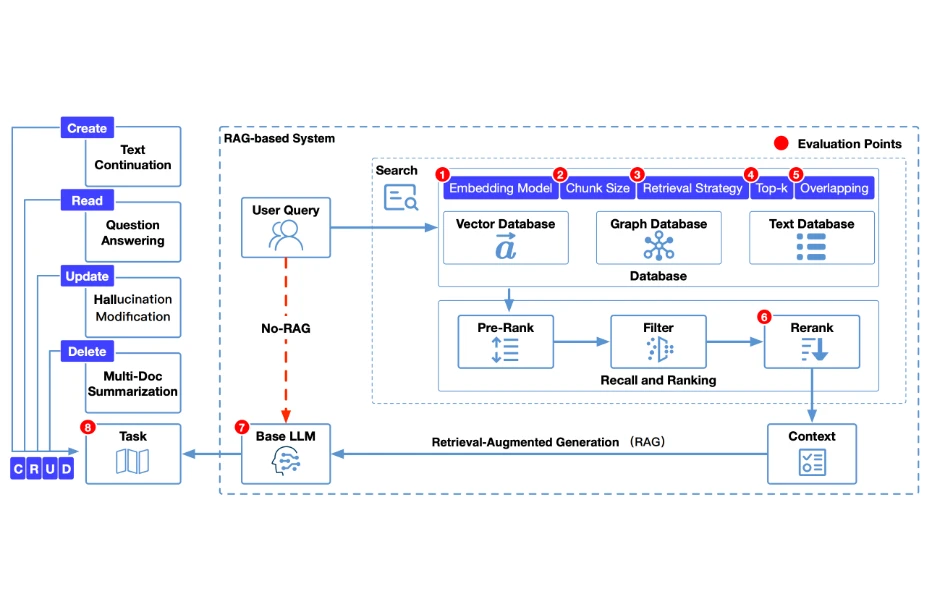

- CRUD-RAG: Comprehensive Chinese RAG Evaluation Benchmark

Academic Achievements

Research-Driven, Inspiring Memory Intelligence

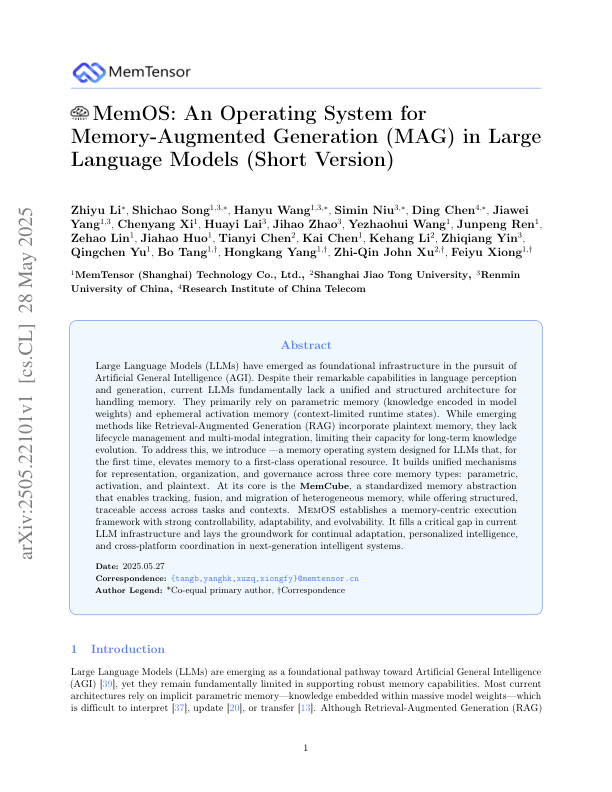

MemOS: An Operating System for Memory-Augmented Generation (MAG) in Large Language Models

2025-5-28

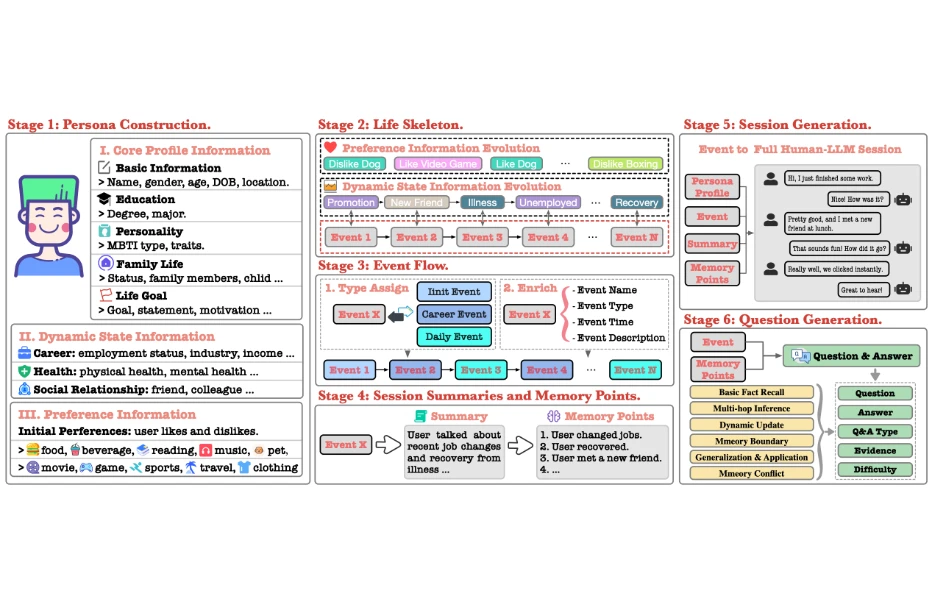

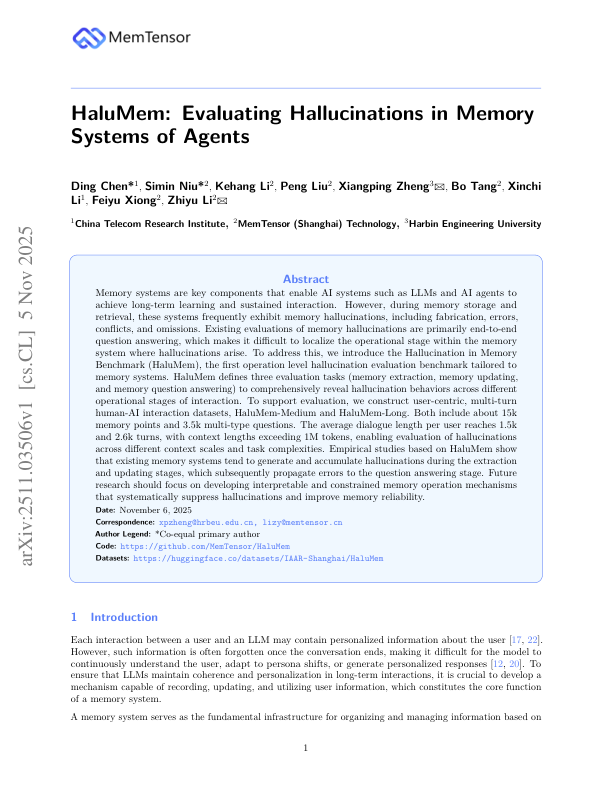

HaluMem: Evaluating Hallucinations in Memory Systems of Agents

2025-11-5

LightMem: Lightweight and Efficient Memory-Augmented Generation

2025-10-21

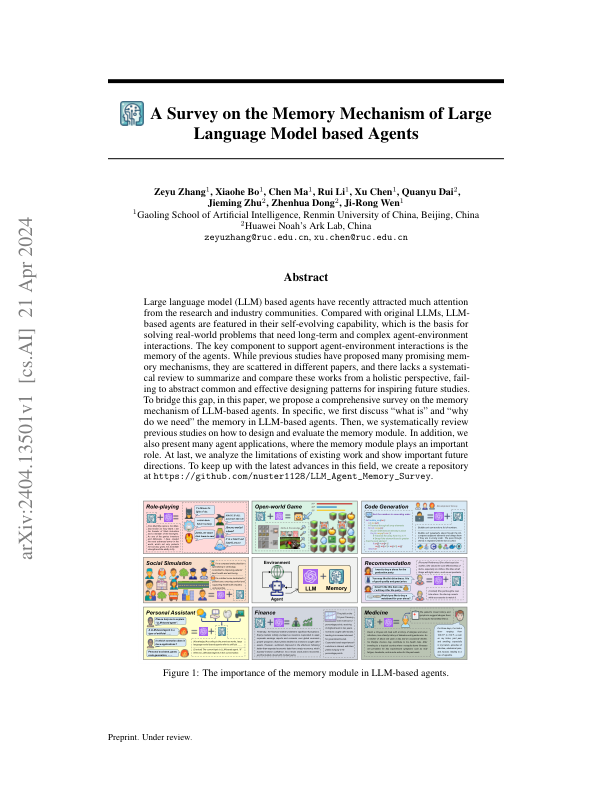

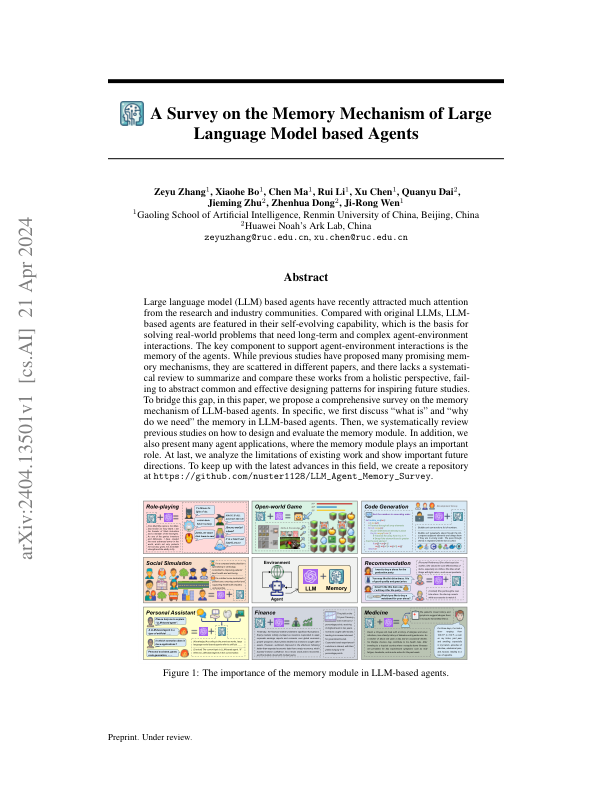

A Survey on the Memory Mechanism of Large Language Model-based Agents

2025-9-10

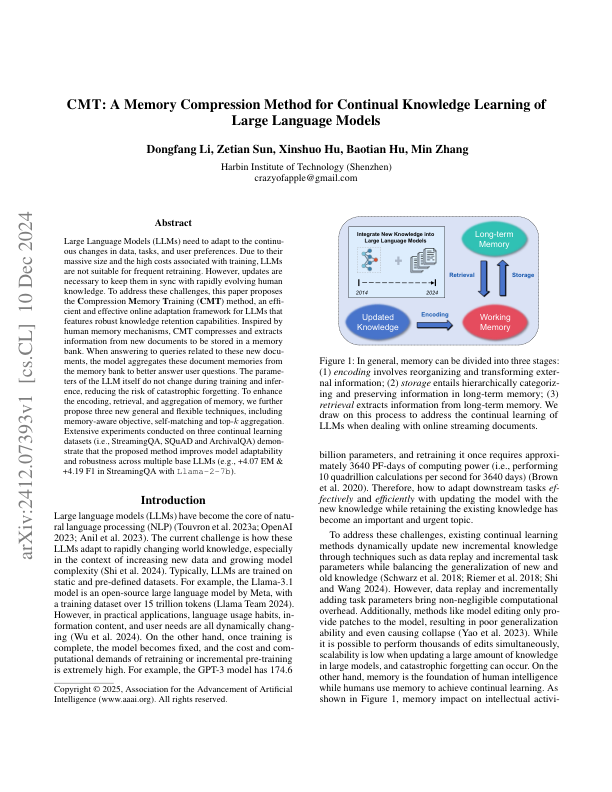

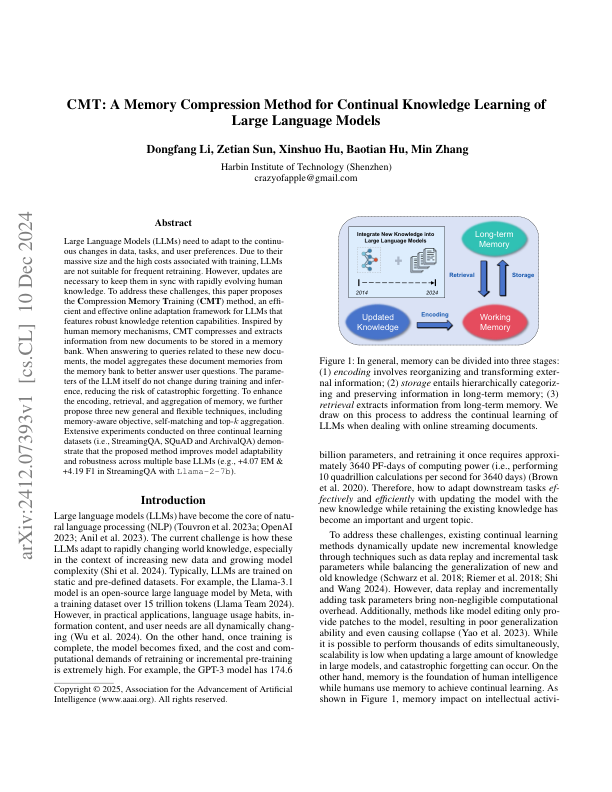

CMT: A Memory Compression Method for Continual Knowledge Learning of Large Language Models

2024-12-10

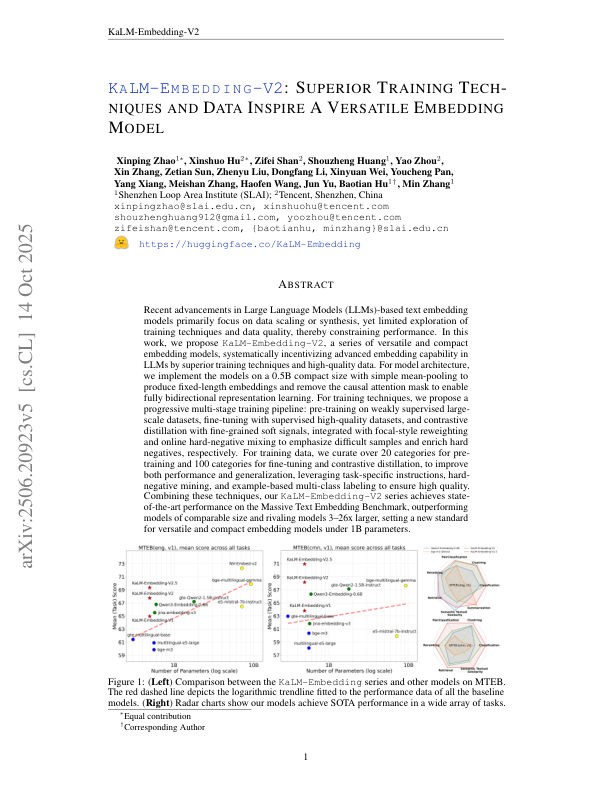

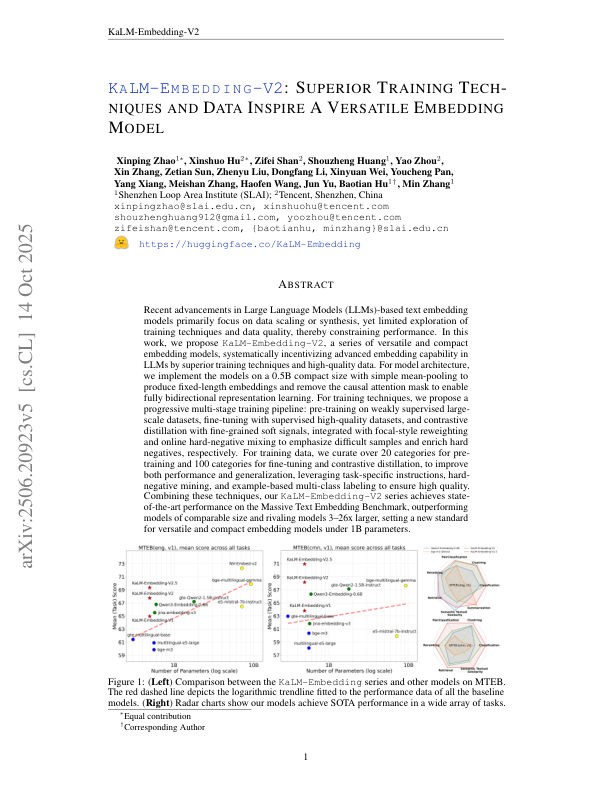

KaLM-Embedding-V2: Superior Training Techniques and Data Inspire A Versatile Embedding Model

2025-6-26

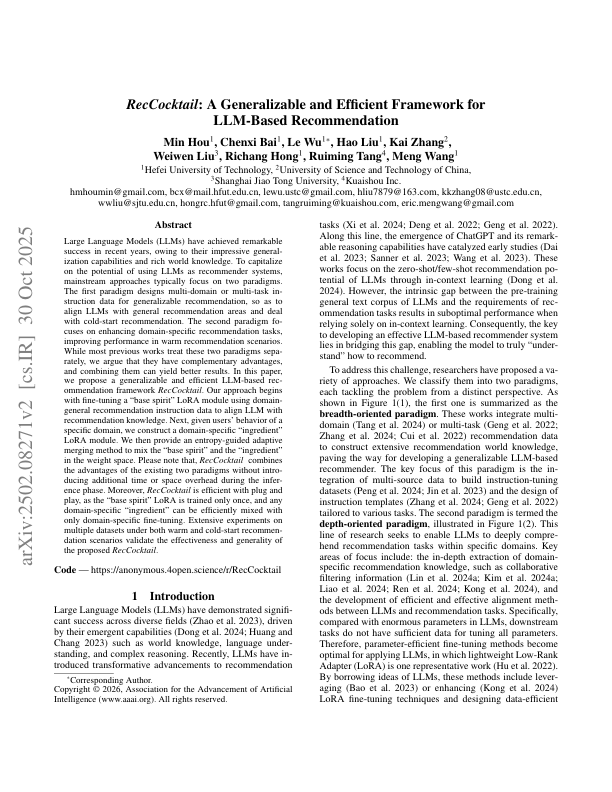

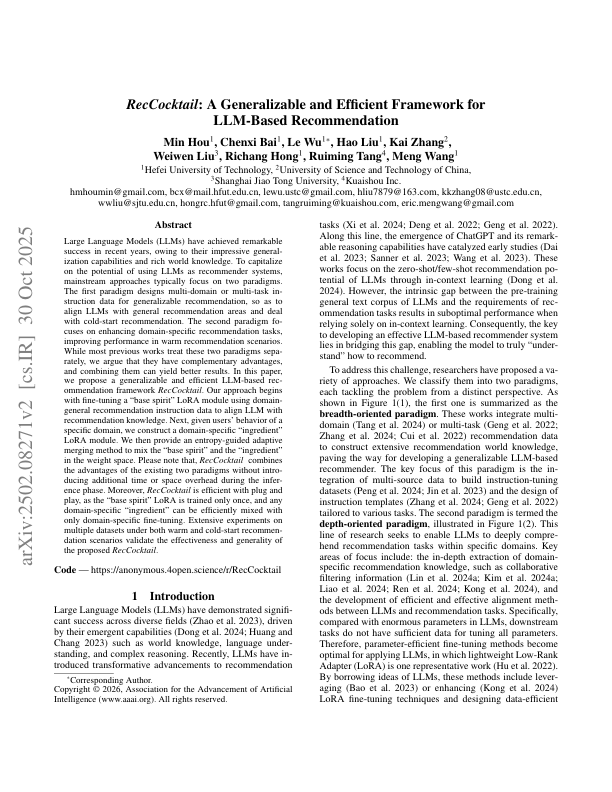

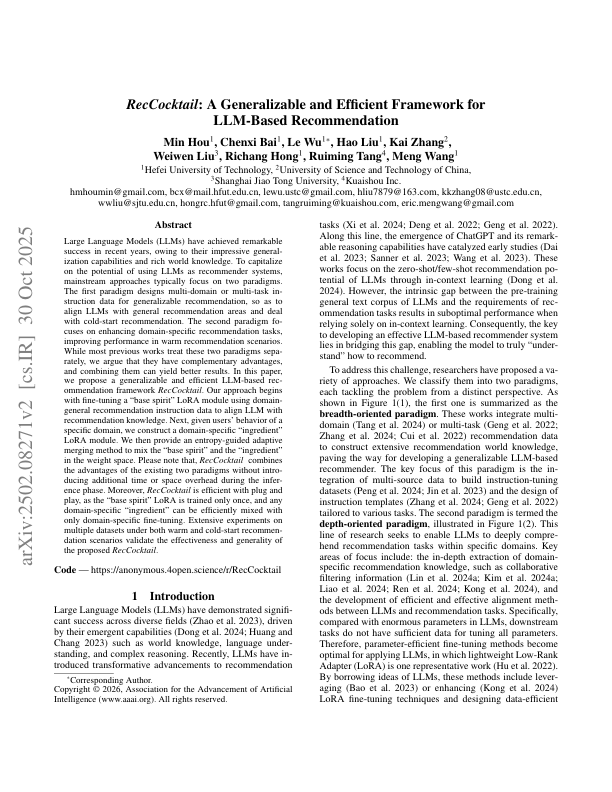

RecCocktail: A Generalizable and Efficient Framework for LLM-Based Recommendation

2025-10-30

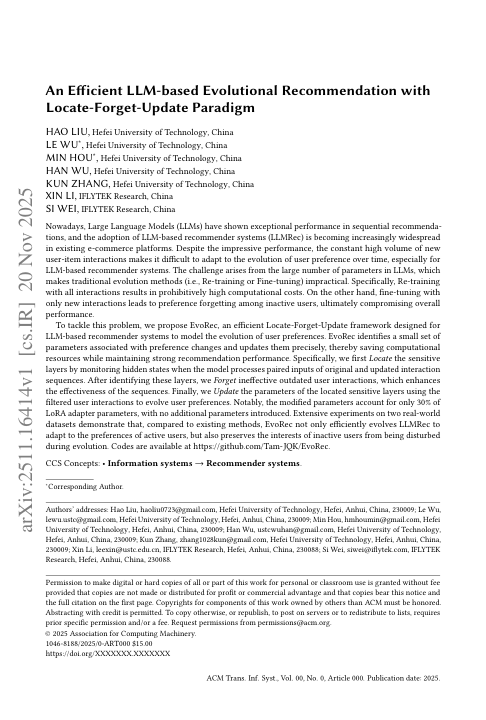

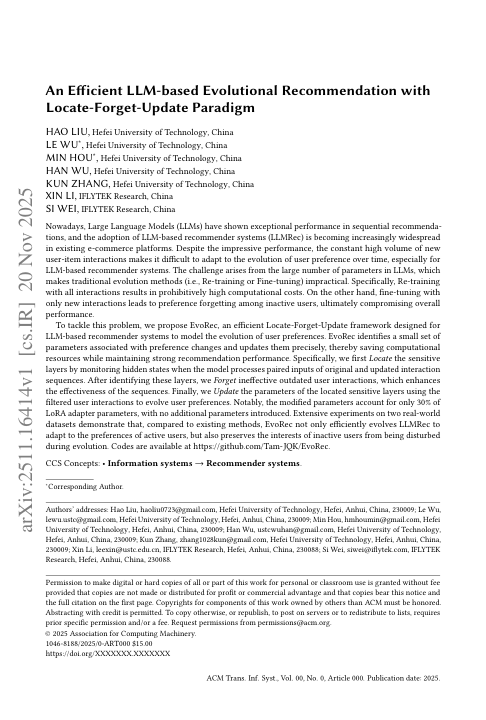

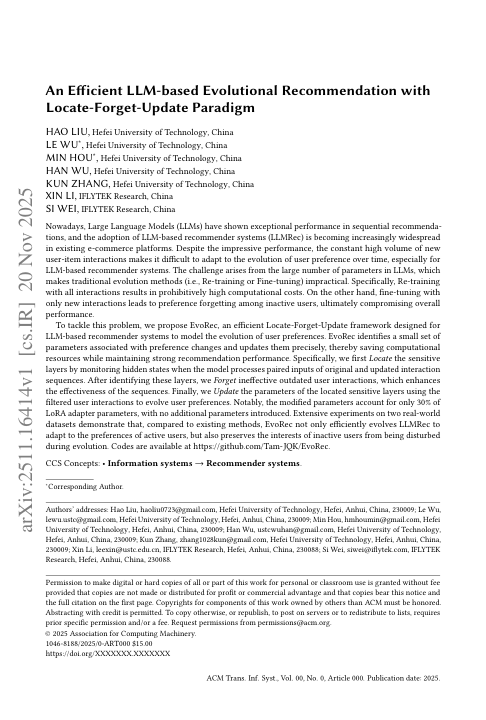

An Efficient LLM-based Evolutional Recommendation with Locate-Forget-Update Paradigm

2025-11-20

Text2Mem: A Unified Memory Operation Language for Memory Operating System

2025-9-14

MoM: Mixtures of Scenario-Aware Document Memories for Retrieval-Augmented Generation Systems

2025-10-16

SafeRAG: Benchmarking Security in Retrieval-Augmented Generation of Large Language Model

2025-1-28

MoC: Mixtures of Text Chunking Learners for Retrieval-Augmented Generation System

2025-3-12

CRUD-RAG: A Comprehensive Chinese Benchmark for Retrieval-Augmented Generation of Large Language Models

2024-1-30

Memory Decoder: A Pretrained, Plug-and-Play Memory for Large Language Models

2025-8-13

Partners

Building and Succeeding Together to Advance Memory Intelligence

- Shanghai Jiao Tong University

- Peking University

- Zhejiang University

- Tongji University

- Renmin University of China

- Beihang University

- Nankai University

- Fudan University

- Harbin Institute of Technology

- Harbin Engineering University

- Shanghai University of Finance and Economics

- Hefei University of Technology

- IAAR

- Memtensor

- China Telecom

- HAISUM

- HIAS, UCAS